How Ordered Action Tokenization (OAT) Makes Autoregressive Models Practical for Real-World Robotics

Autoregressive models transformed natural language processing. They predict the next token in a sequence with remarkable accuracy, scale predictably with data and compute, and power tools we use every day. For years, robotics researchers have asked a natural question:


Can we use the same idea to control robots?

In theory, yes. In practice, it’s been difficult.

Robots don’t speak in words. They move through continuous actions—joint angles, velocities, torques, and trajectories that flow in real time. Turning these smooth motions into something an autoregressive model can predict (like tokens in a sentence) is not straightforward.

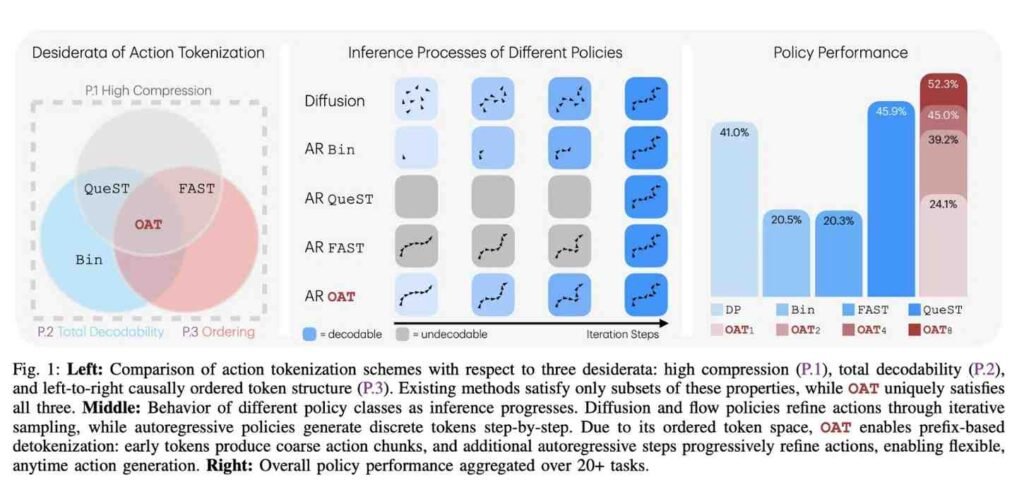

This is where Ordered Action Tokenization (OAT) becomes important. It offers a new way to represent robot actions as compact, structured, and always-decodable token sequences, making autoregressive control not just possible, but practical.

Let’s break down why this matters and how OAT bridges the gap between language-style AI scaling and real-world robotic control.

The Core Problem: Continuous Motion vs Discrete Tokens

Autoregressive models work best with discrete tokens arranged in a meaningful order:

word → word → word → sentence

But robot control looks more like:

joint angles → velocities → positions → force → time

These are continuous values, not symbols. If you naïvely convert them into tokens, you face several issues:

Too many tokens per action step (slow inference)

Poor structure (the model struggles to learn dependencies between tokens)

Invalid sequences that cannot be decoded back into safe robot motions

High latency, which is unacceptable for real-time control

Earlier approaches relied on simple quantization or learned codebooks, but they either inflated the token count or produced outputs that could not always be decoded reliably.
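To make the first and third problems concrete, here is a minimal sketch of the simplest scheme, uniform per-dimension binning. It assumes actions normalized to [-1, 1] and a hypothetical 256-bin vocabulary (`N_BINS`); the function names are illustrative and not taken from any paper or library. Each action dimension costs one token, and decoding only works if the model emits exactly the right number of in-range tokens.

```python
import numpy as np

N_BINS = 256  # hypothetical vocabulary size: 256 bins per action dimension


def naive_tokenize(action: np.ndarray, low: float = -1.0, high: float = 1.0) -> list[int]:
    """One token per dimension: the index of the uniform bin the value falls into."""
    scaled = (np.clip(action, low, high) - low) / (high - low)    # map to [0, 1]
    bins = np.minimum((scaled * N_BINS).astype(int), N_BINS - 1)  # value 1.0 goes to the last bin
    return bins.tolist()


def naive_detokenize(tokens: list[int], dim: int,
                     low: float = -1.0, high: float = 1.0) -> np.ndarray:
    """Decoding succeeds only if the model emitted exactly `dim` in-range tokens."""
    if len(tokens) != dim:
        raise ValueError(f"expected {dim} tokens, got {len(tokens)}")
    if any(not 0 <= t < N_BINS for t in tokens):
        raise ValueError("token outside the bin vocabulary")
    centers = (np.asarray(tokens) + 0.5) / N_BINS  # bin centers in [0, 1]
    return low + centers * (high - low)


action = np.array([0.1, -0.5, 0.9, 0.0, 0.3, -0.2, 0.7])  # one 7-DoF action
tokens = naive_tokenize(action)
print(len(tokens), "tokens per timestep;", len(tokens) * 50, "for a 50-step chunk")
print(naive_detokenize(tokens, dim=7))   # close to the original action

try:
    naive_detokenize(tokens[:6], dim=7)  # one token missing, e.g. generation cut short
except ValueError as err:
    print("cannot decode:", err)
```

For a 7-DoF arm this costs 7 tokens per timestep, or 350 tokens for a 50-step action chunk, and a sequence that is cut short by a single token cannot be decoded at all.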

OAT solves this by rethinking how actions are tokenized and in what order they are generated.

What is Ordered Action Tokenization?

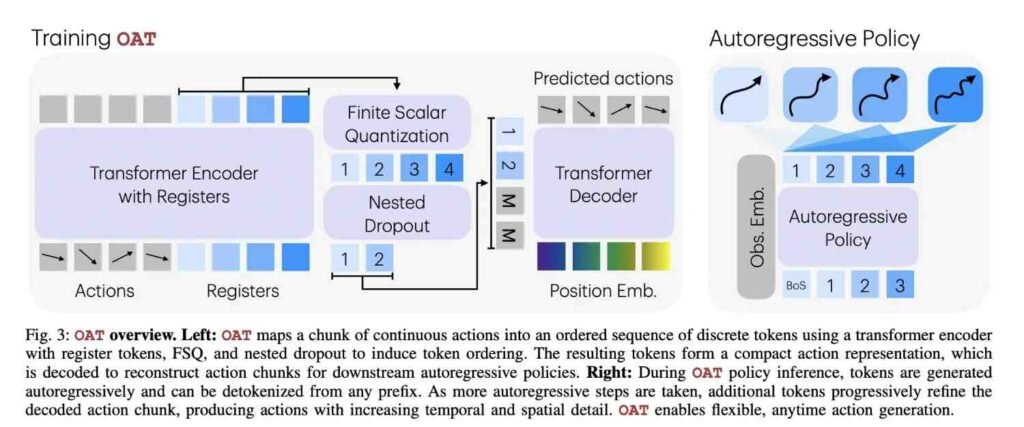

Ordered Action Tokenization is a method that converts a robot’s continuous action at each timestep into a short, causally ordered sequence of tokens with three key properties:

Compact – Very few tokens represent an entire action

Ordered – Tokens are generated in a meaningful causal order

Always Decodable – Any token sequence the model can produce maps back to a valid action

This last property is crucial.

In language, if a model predicts a slightly wrong word, the sentence usually still makes sense.

In robotics, a slightly wrong token can cause invalid or unsafe motion.

OAT ensures that every possible token combination corresponds to a valid robot action.

Why Token Ordering Matters

The “ordered” part of OAT is not cosmetic—it’s fundamental.

Instead of predicting all parts of an action at once, the model predicts them sequentially in a logical dependency chain. For example:

First token decides the coarse motion region

Next token refines the motion direction

Next token adds precision

Final token completes the action specification

Each token conditions the next one.

This mirrors how autoregressive models already work in language and allows the model to learn structure naturally.
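The coarse-to-fine chain above can be illustrated with a hand-built tokenizer. This is a minimal sketch and not OAT's actual tokenizer: it assumes every action dimension is normalized to [-1, 1], and each token halves the current cell along all dimensions at once, so a D-dimensional action uses a vocabulary of 2**D values per token. `encode` and `decode` are hypothetical names. Because each token only refines the cell chosen by the tokens before it, order matters, and any prefix already decodes to a usable action.

```python
import numpy as np


def encode(action: np.ndarray, n_tokens: int) -> list[int]:
    """Emit coarse-to-fine tokens; each one halves the current cell along every dimension."""
    x = np.clip(action, -1.0, 1.0)
    lo, hi = -np.ones(x.size), np.ones(x.size)  # start from the full action range
    tokens = []
    for _ in range(n_tokens):
        mid = (lo + hi) / 2
        bits = x >= mid                               # upper or lower half, per dimension
        tokens.append(int(np.sum(bits.astype(int) << np.arange(x.size))))
        lo = np.where(bits, mid, lo)                  # the next token refines inside this cell
        hi = np.where(bits, hi, mid)
    return tokens


def decode(tokens: list[int], dim: int) -> np.ndarray:
    """Decode any prefix of tokens to the center of the cell it selects."""
    lo, hi = -np.ones(dim), np.ones(dim)
    for t in tokens:
        bits = ((t >> np.arange(dim)) & 1).astype(bool)  # unpack one bit per dimension
        mid = (lo + hi) / 2
        lo = np.where(bits, mid, lo)
        hi = np.where(bits, hi, mid)
    return (lo + hi) / 2


action = np.array([0.1, -0.5, 0.9, 0.0, 0.3, -0.2, 0.7])
tokens = encode(action, n_tokens=8)          # 8 tokens, each in range(2**7)
for k in range(1, len(tokens) + 1):
    error = np.max(np.abs(decode(tokens[:k], dim=7) - action))
    print(f"{k} tokens -> max error {error:.5f}")  # bounded by 2**-k
```

The worst-case error per dimension halves with every extra token. This toy does not compress anything; the compactness claimed for OAT is exactly the part this sketch leaves out.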

The result?

Better learning efficiency

Fewer tokens

Faster inference

More predictable outputs

Making Autoregressive Control Low-Latency

One major concern with autoregressive models in robotics is speed.

Robots can’t wait for 50 tokens to be generated before they move. Decisions must happen in milliseconds.

Because OAT uses very short token chains ordered from coarse to fine, it allows a powerful trade-off:

The robot can choose to generate fewer tokens for faster decisions, or more tokens for higher precision.

This means the same model can operate in:

Fast mode for reactive control

Precise mode for delicate manipulation

This kind of adjustable precision is hard to achieve with earlier tokenization schemes, where a partially generated sequence often cannot be decoded at all.
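The fast-versus-precise trade-off comes down to simple arithmetic: the number of tokens affordable per control step is the control period, minus fixed overhead, divided by the time per generated token. The sketch below uses made-up timing numbers (2.5 ms per token, 5 ms of perception and decoding overhead, an 8-token maximum) purely for illustration, and assumes a coarse-to-fine code in which every token halves the remaining range of each action dimension. The function names are hypothetical.

```python
import math


def tokens_within_budget(control_hz: float, per_token_ms: float,
                         overhead_ms: float = 0.0, max_tokens: int = 8) -> int:
    """Number of tokens that fit into one control period (hypothetical timing model)."""
    period_ms = 1000.0 / control_hz
    fit = math.floor((period_ms - overhead_ms) / per_token_ms)
    return max(0, min(max_tokens, fit))


def worst_case_error(n_tokens: int, low: float = -1.0, high: float = 1.0) -> float:
    """Per-dimension error if every token halves the range: half the final cell width."""
    return (high - low) / 2 ** (n_tokens + 1)


for mode, hz in (("fast", 50), ("precise", 10)):
    k = tokens_within_budget(hz, per_token_ms=2.5, overhead_ms=5.0)
    print(mode, hz, k, worst_case_error(k))
# fast 50 6 0.015625
# precise 10 8 0.00390625
```

With these assumed numbers, a 50 Hz reactive loop can afford only six tokens, while a 10 Hz manipulation loop can spend all eight and gets a four-times-finer worst-case error from the same model.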

Guaranteed Decodability = Safer Robots

Another key property of OAT is that token sequences are structurally valid by design.

No matter what the model predicts, the tokens can always be converted back into a real, executable robot action.

This removes a huge class of failure cases where:

Tokens don’t correspond to real joint configurations

Actions become physically impossible

The controller crashes or behaves unpredictably

In robotics, this reliability is often more important than raw model accuracy.
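One way to get decodability by construction is to make the decoder total: every token value selects a sub-box of the joint-limit box, so no sequence can ever leave it. This is an illustration under stated assumptions, not OAT's decoder: it uses a hypothetical 3-joint arm with made-up limits (`JOINT_LOW`, `JOINT_HIGH`), a vocabulary of 2**3 = 8 tokens, and exhaustively decodes all 512 possible 3-token sequences. Staying inside joint limits covers only the first two failure cases above; collisions and dynamics still need separate handling.

```python
import itertools

import numpy as np

# Hypothetical joint limits in radians for a 3-joint arm; real robots differ.
JOINT_LOW = np.array([-2.9, -1.8, -2.9])
JOINT_HIGH = np.array([2.9, 1.8, 2.9])
DIM = JOINT_LOW.size
VOCAB_SIZE = 2 ** DIM  # one token value per sub-box: 8 for three joints


def decode_to_joints(tokens) -> np.ndarray:
    """Map any sequence of in-vocabulary tokens to a configuration inside the joint limits."""
    lo, hi = JOINT_LOW.astype(float), JOINT_HIGH.astype(float)
    for t in tokens:
        if not 0 <= t < VOCAB_SIZE:
            # A model whose output layer has exactly VOCAB_SIZE logits never produces this.
            raise ValueError(f"token {t} is outside the vocabulary")
        bits = ((t >> np.arange(DIM)) & 1).astype(bool)  # one bit per joint
        mid = (lo + hi) / 2
        lo = np.where(bits, mid, lo)   # shrink the box, never leave it
        hi = np.where(bits, hi, mid)
    return (lo + hi) / 2


# Exhaustively decode every possible 3-token sequence: 8**3 = 512 of them.
decoded = [decode_to_joints(seq) for seq in itertools.product(range(VOCAB_SIZE), repeat=3)]
all_within_limits = all(np.all((JOINT_LOW <= q) & (q <= JOINT_HIGH)) for q in decoded)
print(len(decoded), all_within_limits)  # 512 True
```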

Why This Connects to Language-Model Scaling

Here’s the bigger picture.

Language models scale because they operate on:

Tokens

Sequences

Autoregressive prediction

OAT allows robot control to be framed in the same paradigm.

Now we can:

Train on large robot datasets the way we train on text corpora

Use transformer architectures effectively

Potentially benefit from scaling laws similar to those observed for LLMs

Unify perception, reasoning, and action in one model

This is a step toward generalist robot models trained the way we train language models.

Real-World Implications

With OAT-style tokenization, robots can:

Learn from massive datasets of demonstrations

Execute real-time control with low latency

Adapt precision based on task requirements

Avoid invalid or unsafe motions

Use standard autoregressive training pipelines

This makes it far easier to apply modern AI infrastructure to robotics without inventing entirely new architectures.

A Shift in How We Think About Robot Actions

Traditionally, robot control focused on:

Continuous control signals → classical controllers → optimization

OAT reframes the problem as:

Structured token prediction → autoregressive model → decoded action

This is a conceptual shift, not just a technical trick.

It treats robot motion as a language of actions that can be learned, predicted, and scaled.

Closing Insights

Ordered Action Tokenization doesn’t just improve token efficiency. It unlocks the ability to apply language-model style learning to robotics in a safe, fast, and structured way.

By ensuring compact tokens, meaningful ordering, and guaranteed decodability, OAT makes autoregressive control viable for real-world robots.

As robotics moves toward foundation models and large-scale training, approaches like OAT may become the bridge that finally connects how we train AI for language with how we control machines in the physical world.